Reducing the size of neural networks is essential for improving efficiency, especially in resource-constrained environments. This project implemented and compared three pruning methods (L1-norm filter pruning, activation-based pruning, and sparsity-informed adaptive pruning, or SAP) to evaluate their impact on model sparsity, accuracy, and overall performance.

For L1-norm-based pruning, the filters with the smallest weight magnitudes were removed iteratively, with a round of fine-tuning after each pruning cycle. Activation-based pruning removed neurons whose average activations stayed consistently low during training; both fixed and variable thresholds were used to evaluate stability across pruning runs. The third method, SAP, used the PQ Index (PQI) to estimate sub-network compressibility and adaptively determine how much to prune at each iteration, following the approach described by Diao et al. (2023). All methods were tested across a range of sparsity levels and fine-tuned after pruning to recover performance.
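As a rough illustration of two of the scoring rules described above, the sketch below ranks filters by L1 norm and computes a PQ Index for a flat weight vector. It is not the project's code. The function names are hypothetical, filters are represented as plain lists of weights, and the PQI formula, 1 - d^(1/q - 1/p) * ||w||_p / ||w||_q with 0 < p < q, reflects our reading of Diao et al. (2023). The choice p = 1, q = 2 is only an example and may differ from the values used in the project.

```python
def l1_filter_scores(filters):
    """Return the L1 norm (sum of absolute weights) of each filter.

    Each filter is given as a flat list of weights (an assumption made
    to keep the sketch free of framework dependencies).
    """
    return [sum(abs(w) for w in f) for f in filters]


def filters_to_prune(filters, fraction):
    """Return the indices of the `fraction` of filters with the smallest L1 norm."""
    scores = l1_filter_scores(filters)
    k = int(len(filters) * fraction)  # number of filters to remove this cycle
    order = sorted(range(len(filters)), key=lambda i: scores[i])
    return sorted(order[:k])


def pq_index(weights, p=1.0, q=2.0):
    """PQ Index of a flat weight vector (assumed form, see lead-in).

    Values near 0 indicate evenly spread magnitudes (little to prune);
    values closer to 1 indicate a few dominant weights (more compressible).
    """
    if not 0 < p < q:
        raise ValueError("PQI requires 0 < p < q")
    d = len(weights)
    norm_p = sum(abs(w) ** p for w in weights) ** (1.0 / p)
    norm_q = sum(abs(w) ** q for w in weights) ** (1.0 / q)
    if norm_q == 0:
        raise ValueError("PQI is undefined for an all-zero vector")
    return 1.0 - d ** (1.0 / q - 1.0 / p) * norm_p / norm_q


# Toy example: four filters with two weights each (made-up values).
toy_filters = [[0.5, -0.5], [0.1, 0.0], [1.0, 2.0], [-0.2, 0.1]]
print(filters_to_prune(toy_filters, 0.5))  # indices of the two weakest filters
print(pq_index([1.0, 1.0, 1.0, 1.0]))      # uniform magnitudes
print(pq_index([0.0, 0.0, 3.0, 0.0]))      # a single dominant weight
```

In an iterative setup, a ranking like this would be recomputed after each fine-tuning round, and an adaptive scheme such as SAP would use the PQI value to decide how aggressive the next pruning step should be.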

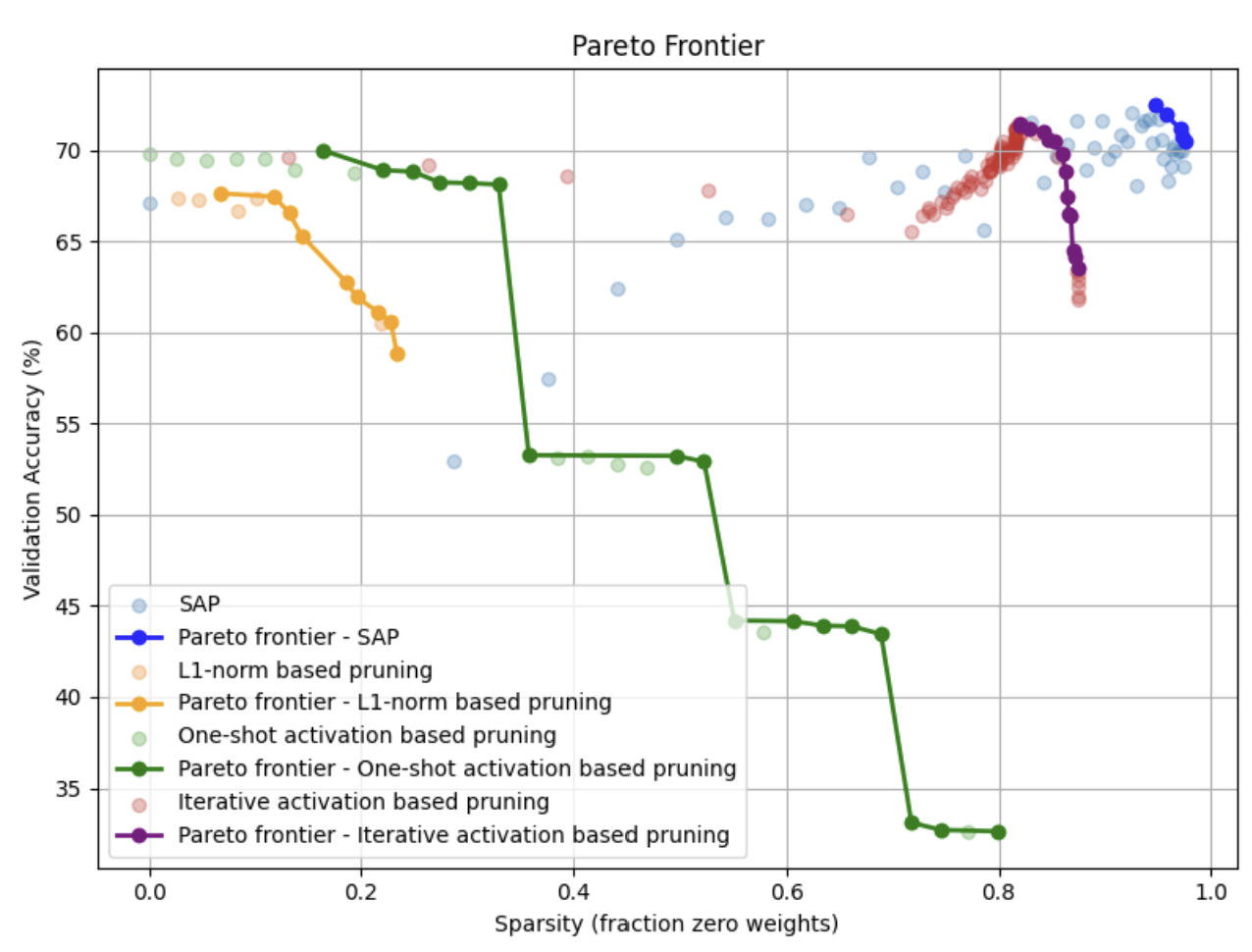

Performance Comparison: Pareto Frontier

SAP achieved the best overall trade-off, reaching 96.43% sparsity at 70.84% accuracy for a final score of 0.8345. Iterative activation-based pruning followed, maintaining 69.82% accuracy at 85.94% sparsity. L1-norm pruning was the most straightforward to implement and performed well at lower sparsity levels, but its accuracy dropped sharply beyond 30% sparsity. SAP consistently outperformed the other methods on the sparsity–accuracy curve and dominated the Pareto frontier.
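To make the Pareto comparison concrete, here is a minimal sketch of a dominance check over (sparsity, accuracy) pairs. The helper name `pareto_frontier` is hypothetical, and the only data points used are the two operating points reported above. The full curves from the figure are not reproduced here.

```python
def pareto_frontier(points):
    """Return the non-dominated (label, sparsity, accuracy) tuples.

    A point is dominated if another point is at least as good on both
    axes and strictly better on at least one (higher is better for both).
    """
    frontier = []
    for name, sparsity, accuracy in points:
        dominated = any(
            s >= sparsity and a >= accuracy and (s > sparsity or a > accuracy)
            for _, s, a in points
        )
        if not dominated:
            frontier.append((name, sparsity, accuracy))
    return sorted(frontier, key=lambda t: t[1])  # order by sparsity


# The two operating points reported in the text (percentages).
results = [
    ("SAP", 96.43, 70.84),
    ("Iterative activation-based", 85.94, 69.82),
]
print(pareto_frontier(results))  # → [('SAP', 96.43, 70.84)]
```

With only these two points, SAP is both sparser and more accurate, so it alone remains on the frontier. With full per-method curves, several points from different methods could survive the check.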

This project highlighted key trade-offs among pruning strategies. L1-norm pruning is simple but less effective at higher compression rates. Activation-based pruning targeted parameters more precisely, especially in dense linear layers, by leveraging functional activation patterns. SAP proved the most robust because it adapts pruning intensity to the network's structure. The results underscored the importance of iterative fine-tuning and showed that not all weights are equally redundant. Overall, this work deepened our understanding of structured versus unstructured pruning and of the value of adaptive, data-informed strategies in neural network compression.