Heuristic Model-Scaling Projection for Learning Rate Estimation

10605 Machine Learning with Large Datasets

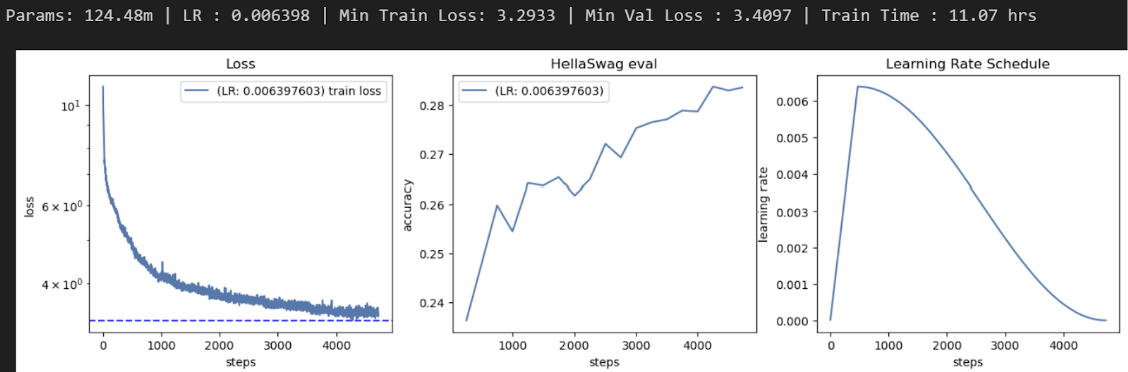

Training large-scale models like GPT-2 from scratch is computationally expensive, which makes full-scale hyperparameter sweeps impractical. This project explores a zero-shot hyperparameter tuning strategy, Heuristic Model-Scaling Projection (MSP), for estimating the optimal learning rate of a large model without sweeping at that size. The goal was to identify the optimal learning rate for the 124M-parameter GPT-2 model by training much smaller models and extrapolating the trend in their optimal learning rates.

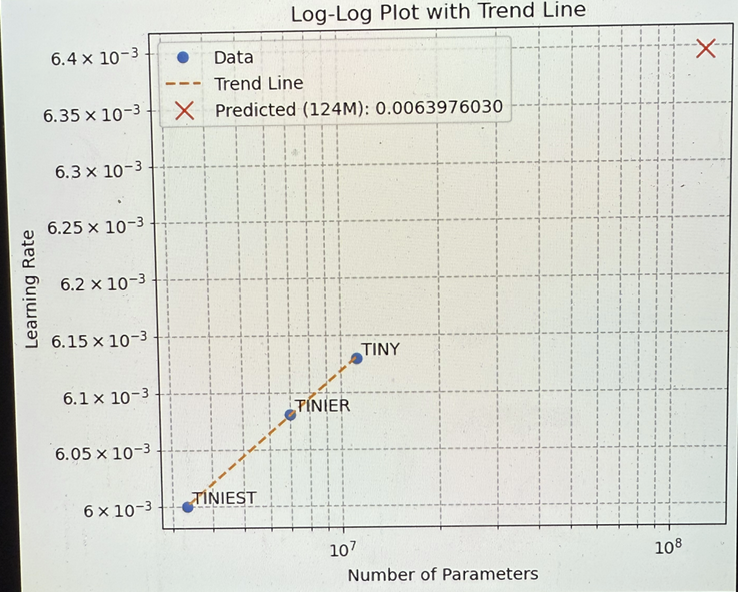

I used log-uniform sampling to test 39 learning rates between 1e-4 and 1e-1. Each sampled learning rate was evaluated by training a simplified GPT-2 model (1 transformer layer, 64 channels, 1 attention head) for 1000 steps. After identifying the best learning rate at this smallest scale, I repeated the process for two larger models: one with 2 layers, 128 channels, and 2 heads (Tinier), and one with 3 layers, 192 channels, and 3 heads (Tiny).

I recorded the best learning rate for each model size and plotted these points against parameter count on a log-log scale. A regression line fitted to the three data points models the relationship between model size and optimal learning rate; a straight line on a log-log plot corresponds to a power law between the two quantities. Extrapolating this line gives the predicted optimal learning rate for the 124M-parameter GPT-2 Small model.
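The projection pipeline can be sketched in plain Python as below. This is a minimal illustration under stated assumptions, not the project's code: the function names are hypothetical, the training runs themselves are omitted, and the parameter-count formula assumes the standard GPT-2 block (with biases and tied input/output embeddings), a 50,257-token vocabulary, and a 1024-token context for every model. The write-up does not say which context length was used or whether embedding parameters were counted, so the absolute sizes may differ from the ones behind the original plot. The regression is an ordinary least-squares fit in log10 space, which is one standard way to fit a line on a log-log plot.

```python
import math
import random


def log_uniform_learning_rates(n, low=1e-4, high=1e-1, seed=0):
    """Sample n learning rates uniformly in log10 space between low and high."""
    rng = random.Random(seed)
    log_low, log_high = math.log10(low), math.log10(high)
    return sorted(10 ** rng.uniform(log_low, log_high) for _ in range(n))


def gpt2_param_count(n_layer, n_embd, vocab_size=50257, block_size=1024):
    """Approximate GPT-2 parameter count (assumes tied token embedding / LM head).

    Each block has 12*C^2 + 13*C parameters: attention (4*C^2 + 4*C),
    MLP (8*C^2 + 5*C), and two LayerNorms (4*C). The number of heads does
    not change the count because head_dim = n_embd / n_head.
    """
    embeddings = vocab_size * n_embd + block_size * n_embd  # token + position
    per_layer = 12 * n_embd ** 2 + 13 * n_embd
    final_ln = 2 * n_embd
    return embeddings + n_layer * per_layer + final_ln


def fit_log_log(param_counts, best_lrs):
    """Least-squares fit of log10(lr) = intercept + slope * log10(N)."""
    xs = [math.log10(n) for n in param_counts]
    ys = [math.log10(lr) for lr in best_lrs]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return slope, intercept


def predict_lr(n_params, slope, intercept):
    """Evaluate the fitted power law lr = 10**intercept * N**slope."""
    return 10 ** (intercept + slope * math.log10(n_params))


if __name__ == "__main__":
    candidates = log_uniform_learning_rates(39)
    # (layers, channels, heads) for the smallest, Tinier, and Tiny models.
    configs = [(1, 64, 1), (2, 128, 2), (3, 192, 3)]
    sizes = [gpt2_param_count(layers, channels) for layers, channels, _ in configs]
    # HYPOTHETICAL best learning rates for illustration only; substitute the
    # values actually selected by each 1000-step sweep.
    best = [3e-3, 2e-3, 1.5e-3]
    slope, intercept = fit_log_log(sizes, best)
    target = gpt2_param_count(12, 768)  # GPT-2 Small: 12 layers, 768 channels
    print(f"{len(candidates)} candidates in [{candidates[0]:.2e}, {candidates[-1]:.2e}]")
    print(f"model sizes: {sizes}, GPT-2 Small: {target:,}")
    print(f"slope={slope:.3f}, projected LR={predict_lr(target, slope, intercept):.2e}")
```

Fitting in log space keeps each model size equally weighted regardless of its absolute parameter count, and a negative fitted slope means the projected learning rate shrinks as the model grows. With only three points, the extrapolation across more than an order of magnitude in size is sensitive to noise in each sweep's selected best learning rate.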