Gesture Recognition Based Screen Control

24-782 Machine Learning and Artificial Intelligence for Engineers

Hand gesture recognition (HGR) offers an intuitive and increasingly relevant interface for human-computer interaction, with applications ranging from AR/VR to assistive technology. However, many high-performing systems rely on depth sensors or optical flow, which add latency, cost, and complexity. This project aimed to develop a lightweight, real-time gesture recognition pipeline that operates solely on RGB video input and enables accurate screen control with minimal computational overhead. By combining MediaPipe Hands for landmark extraction with a Slow–Fast temporal convolutional network (TCN), we built a system designed for everyday environments and commodity hardware.

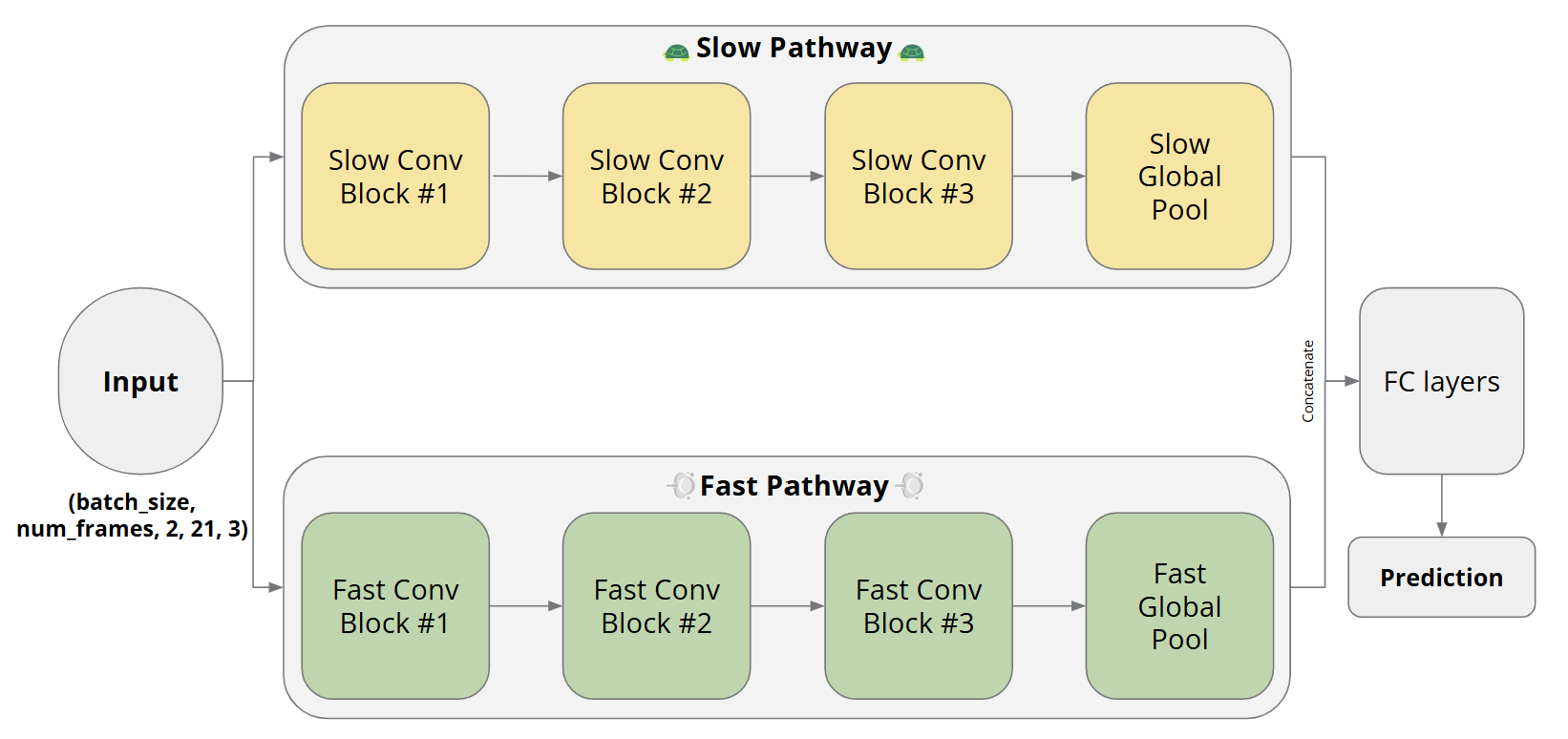
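The front end of this pipeline can be sketched as below. The sketch assumes per-frame landmarks arrive as a (21, 3) array in MediaPipe's hand topology (index 0 is the wrist, index 9 the middle-finger MCP joint). The MediaPipe call that produces them is not shown. The wrist-centering, the scale normalization, the 32-frame window, and the missing-hand policy are hypothetical choices for illustration. The names `normalize_landmarks` and `LandmarkWindow` are also hypothetical, not the project's code.

```python
from collections import deque

import numpy as np

NUM_LANDMARKS = 21  # MediaPipe Hands reports 21 landmarks per detected hand
WINDOW = 32         # hypothetical clip length (frames) passed to the classifier


def normalize_landmarks(landmarks) -> np.ndarray:
    """Map one frame of (21, 3) landmarks to a translation- and scale-invariant 63-dim vector.

    Assumption: index 0 is the wrist and index 9 the middle-finger MCP joint,
    whose distance from the wrist serves as a hypothetical scale reference.
    """
    pts = np.asarray(landmarks, dtype=np.float32).reshape(NUM_LANDMARKS, 3)
    pts = pts - pts[0]                    # center on the wrist
    scale = np.linalg.norm(pts[9, :2])    # wrist-to-middle-MCP distance in the image plane
    if scale > 1e-6:
        pts = pts / scale
    return pts.reshape(-1)


class LandmarkWindow:
    """Hypothetical fixed-length buffer of per-frame features for real-time classification."""

    def __init__(self, window: int = WINDOW):
        self.frames = deque(maxlen=window)  # oldest frame drops out automatically

    def push(self, landmarks) -> None:
        if landmarks is None:
            # No hand detected: repeat the last frame, or zeros if empty (hypothetical policy).
            feat = self.frames[-1] if self.frames else np.zeros(NUM_LANDMARKS * 3, np.float32)
        else:
            feat = normalize_landmarks(landmarks)
        self.frames.append(feat)

    def ready(self) -> bool:
        return len(self.frames) == self.frames.maxlen

    def clip(self) -> np.ndarray:
        """Return a (63, window) array: features as channels, time as the last axis."""
        return np.stack(list(self.frames), axis=1)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    buf = LandmarkWindow()
    for _ in range(WINDOW):
        buf.push(rng.random((NUM_LANDMARKS, 3)))  # random stand-in for MediaPipe output
    print(buf.ready(), buf.clip().shape)
```

Once `ready()` returns true, `clip()` can be handed to a temporal classifier after every new frame. Predictions then update at the camera's frame rate while only one window of features is kept in memory.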

We used the IPN Hand dataset and a custom seven-class screen-control dataset as benchmarks. MediaPipe Hands extracted 21 hand keypoints per frame, and the resulting keypoint sequences were fed into a dual-path Slow–Fast TCN for temporal gesture classification. The slow path captured long-term dynamics, while the fast path responded to short-term motion. We first trained the model on the IPN dataset and then fine-tuned it on clean and messy versions of our custom dataset to adapt it to real-world screen-control gestures. The model was implemented in PyTorch, and training relied on learning-rate scheduling and iterative fine-tuning. Performance was compared against the IPN benchmark model in its RGB-only and RGB+flow configurations.
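The dual-path design and the pretrain-then-fine-tune step above can be sketched in PyTorch as follows. This is a minimal sketch that assumes keypoint clips shaped (batch, 63, time). The slow path sees every `alpha`-th frame through wider, dilated convolutions. The fast path runs at the full frame rate with fewer channels. This loosely follows the SlowFast idea of pairing a low-frame-rate, high-capacity path with a high-frame-rate, lightweight one. All names (`temporal_block`, `SlowFastTCN`, `prepare_for_finetuning`) are hypothetical. The layer sizes, `alpha = 4`, the AdamW optimizer, and the cosine learning-rate schedule are likewise illustrative, not the project's reported configuration.

```python
import torch
import torch.nn as nn


def temporal_block(in_ch: int, out_ch: int, kernel: int, dilation: int = 1) -> nn.Sequential:
    """1-D convolution over time + BatchNorm + ReLU; padding preserves length for odd kernels."""
    pad = dilation * (kernel - 1) // 2
    return nn.Sequential(
        nn.Conv1d(in_ch, out_ch, kernel, padding=pad, dilation=dilation),
        nn.BatchNorm1d(out_ch),
        nn.ReLU(inplace=True),
    )


class SlowFastTCN(nn.Module):
    """Hypothetical two-path TCN over keypoint sequences shaped (batch, features, time)."""

    def __init__(self, in_features: int = 63, num_classes: int = 7,
                 alpha: int = 4, slow_ch: int = 128, fast_ch: int = 32):
        super().__init__()
        self.alpha = alpha  # the slow path sees every alpha-th frame
        # Slow path: low frame rate, wide channels, dilated kernel for long-range context.
        self.slow = nn.Sequential(
            temporal_block(in_features, slow_ch, kernel=3),
            temporal_block(slow_ch, slow_ch, kernel=3, dilation=2),
        )
        # Fast path: full frame rate, narrow channels, short kernels for brief motions.
        self.fast = nn.Sequential(
            temporal_block(in_features, fast_ch, kernel=3),
            temporal_block(fast_ch, fast_ch, kernel=3),
        )
        self.head = nn.Linear(slow_ch + fast_ch, num_classes)

    def forward(self, x: torch.Tensor) -> torch.Tensor:
        slow = self.slow(x[:, :, :: self.alpha]).mean(dim=2)  # subsample in time, then average-pool
        fast = self.fast(x).mean(dim=2)                       # full-rate features, average-pooled
        return self.head(torch.cat([slow, fast], dim=1))      # unnormalized class logits


def prepare_for_finetuning(model: SlowFastTCN, num_classes: int, lr: float = 1e-3, epochs: int = 20):
    """Replace the classifier for a new label set and attach an optimizer and LR schedule.

    AdamW and cosine annealing are illustrative assumptions, not confirmed project settings.
    """
    model.head = nn.Linear(model.head.in_features, num_classes)
    optimizer = torch.optim.AdamW(model.parameters(), lr=lr)
    scheduler = torch.optim.lr_scheduler.CosineAnnealingLR(optimizer, T_max=epochs)
    return optimizer, scheduler


if __name__ == "__main__":
    PRETRAIN_CLASSES = 13  # placeholder for the IPN label count
    model = SlowFastTCN(num_classes=PRETRAIN_CLASSES)
    # Pretraining on IPN keypoint clips would happen here.
    optimizer, scheduler = prepare_for_finetuning(model, num_classes=7)  # seven screen-control gestures
    clips, labels = torch.randn(8, 63, 32), torch.randint(0, 7, (8,))    # random stand-in batch
    loss = nn.functional.cross_entropy(model(clips), labels)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()
    scheduler.step()  # typically called once per epoch
    print(f"fine-tuning loss: {loss.item():.3f}")
```

Fusing the two paths by concatenating their pooled features keeps the classifier head small, in line with the low-overhead goal. The lateral connections between paths used in the original SlowFast video networks are omitted here for brevity.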