Multilingual RAG-Powered Research Assistant with Paper Recommendations

14825 Generative AI and LLM Final Project

Finding relevant academic research can be time-consuming, especially across domains and languages. This project aimed to build a multilingual research assistant using a Retrieval-Augmented Generation (RAG) pipeline to synthesize domain-specific knowledge and recommend academic papers. The goal was to streamline early-stage research by combining semantic search with LLM-generated summaries in the user’s preferred language.

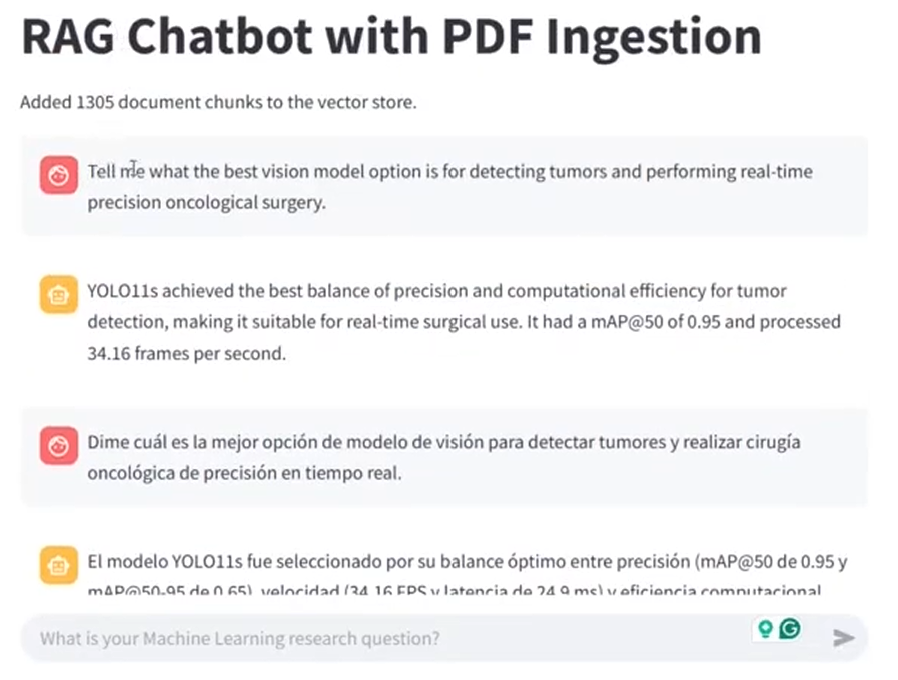

I built a Streamlit web application in which users enter a natural language query, select a research domain, and optionally request paper recommendations. The application supports 5–10 languages and returns each answer in the language of the query. I used FlowiseAI and LangChain to design the RAG pipeline and orchestrate its components, and I embedded 20 academic papers into ChromaDB for domain-specific semantic search. When a user submits a query, the system retrieves relevant passages from ChromaDB and supplies them to the LLM, which generates a synthesized answer grounded in those passages. I also compared multiple embedding models and large language models to evaluate pipeline performance. The application was deployed to the cloud and made publicly accessible.
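The retrieve-then-generate flow described above can be illustrated with a minimal, dependency-free sketch. This is not the project's code: the toy bag-of-words `embed` function stands in for a real embedding model, the in-memory `PASSAGES` list stands in for the ChromaDB collection, and the helper names (`retrieve`, `build_prompt`, `recommend_papers`), the sample passages, and the prompt wording are all assumptions made for illustration. Deriving recommendations from the source papers of the retrieved passages is likewise one plausible approach, not necessarily the one used in the application.

```python
import math
import re
from collections import Counter


def embed(text):
    """Toy bag-of-words vector (assumption: stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, passages, k=2):
    """Return the k passages most similar to the query, best match first."""
    q = embed(query)
    ranked = sorted(passages, key=lambda p: cosine(q, embed(p["text"])), reverse=True)
    return ranked[:k]


def build_prompt(query, retrieved, language):
    """Assemble the LLM prompt: language instruction, retrieved context, question."""
    context = "\n".join(f"[{p['paper']}] {p['text']}" for p in retrieved)
    return (
        f"Answer in {language} using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {query}"
    )


def recommend_papers(retrieved):
    """Hypothetical recommendation step: unique source papers in ranking order."""
    seen = []
    for p in retrieved:
        if p["paper"] not in seen:
            seen.append(p["paper"])
    return seen


# Hypothetical sample data standing in for passages from the embedded papers.
PASSAGES = [
    {"paper": "paper-A", "text": "Dense retrieval improves question answering over sparse baselines."},
    {"paper": "paper-B", "text": "Protein folding models predict structure from amino acid sequences."},
    {"paper": "paper-C", "text": "Retrieval augmented generation grounds answers in retrieved passages."},
]

query = "How does retrieval augmented generation ground answers?"
top = retrieve(query, PASSAGES, k=2)
prompt = build_prompt(query, top, language="Spanish")
recommended = recommend_papers(top)
print(prompt)
```

In a real pipeline the `prompt` string would be sent to the chosen LLM, and `retrieve` would be replaced by a similarity query against the vector store. Placing the language instruction in the prompt is one simple way to keep the answer in the user's language.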